
My name is Jamie Portnoff, and I am the founder and principal consultant at JMP Consulting. JMP Consulting helps clients in the pharmaceutical industry achieve and sustain compliance and improve overall performance in pharmacovigilance (PV) and related functions such as quality, medical information and regulatory affairs. Before founding JMP Consulting, I worked in the pharmaceutical industry. Not many management consultants working in PV have hands-on, real-world PV experience; this experience means I understand the realities of day-to-day work in and around PV, and how challenging it can be to deliver against requirements and expectations. In my earliest days in industry, I especially enjoyed working with people and on projects, and I soon realised that I wanted to combine my problem-solving and analytical skills with my practical industry knowledge. After a few years of working with big consultancy companies, I decided to start JMP Consulting.

Let us look at the last three decades.

In the 1990s there were basic PV safety database systems, such as ArisG, ArisLite and ClinTrace. Fax machines were a huge part of the technology that enabled PV processes, with a high volume of incoming and outgoing data sent by fax. Processes were extremely paper-intensive and were designed to accommodate transactional work, such as processing cases and compiling aggregate reports; everything was very compliance-focused. Consequently, there was demand for full-time roles dedicated to paper management, typing up documents and data entry. Teams were typically regionalized, and everything was done “onshore”.

In the 2000s, PV technology became more sophisticated and more globally oriented. There were advances in what the technology could do, and the major technology players consolidated through M&A activity. Paper-based processes began to give way to digitization and electronic workflow management, and analytics tools became more prevalent and more user-friendly. However, a typical PV department was still very paper-intensive. Some regionalized models began to consolidate into one system, one process and one organization, particularly across the US and Europe.

Throughout this decade, more stringent regulatory requirements were continually being introduced, such as the Risk Evaluation and Mitigation Strategy (REMS) in the US and Volume 9A in the EU. Consequently, the bar was raised for the calibre of work, and quality management expectations increased. We saw more focused teams dedicated to signal detection and risk management, and specialized teams emerged to manage growing business system needs as the regulatory requirements led to increasingly complex systems. Dedicated vendor oversight teams also became necessary as companies began to work offshore with vendors.

Over the last decade, Good Pharmacovigilance Practices (GVP) were introduced in the European Union (EU). The Qualified Person for Pharmacovigilance (QPPV) is not a new requirement, but it became clear that this person needs a whole team around them to support them and help shoulder the workload.

Offshore work has grown in magnitude, partnerships between companies have become an integral part of how business is done, and next generation technology is rolling out to improve efficiency and consistency. Safety systems have become truly global, enabling a scalable end-to-end safety process within a single system.

Big changes are coming in PV technology, and they will drive major shifts in the way we think about how PV work gets done. We have seen PV technology evolve before, but this time around the change looks set to be more impactful than anything from the past 20 years.

With the advent of next-generation technology, new hard skills will be required, such as an understanding of machine learning, natural language processing and artificial intelligence. Organizations need to manage the transformation of the PV business effectively and regularly, and to leverage advanced analytical tools to derive meaningful insights from various data sets. Additional ‘soft’ skills will also be needed, such as adaptability, flexibility and open-mindedness, as well as the ability to ‘think outside the box’ and drive improvements through innovative thinking.

New roles within the organisation will emerge, with specific roles dedicated to:

Meanwhile, other roles will fade out and teams of people (in-house or outsourced) performing transactional activities will become a thing of the past.

From a process perspective – processes must be highly scalable to accommodate growth in volume and complexity, and a blend of proven and cutting-edge technology is needed to support and enable this. A future-ready process has metrics that enable continuous improvement; it can evolve and adapt efficiently to accommodate new regulations, innovative products and changing stakeholder expectations at the same time.

From a technology perspective – technology must be highly agile, flexible and robust. It needs to be business-led with strong IS support, and it should be woven into an organisation’s processes, not the other way around.

From a people perspective – people in the organisation must accept increasing automation of processes. You can have the best technology in the world, but if the people in the team reject it, it is not going to succeed. Well-managed resource models are also hugely important. The organisational structure must be designed around the needs of the business, not vice versa. Employees should offer more than one skillset and, in return, must have a pathway to develop professionally. It is critical that a team can approach things from different angles and adapt to change; these days, excelling in just one area is often not enough.

The challenges of implementing ePRO – part one

When it comes to documenting the advantages of using electronic patient-reported outcomes (ePRO) over paper in clinical trials, the benefits are clear.

With all the advantages of using ePRO over paper, it seems a no-brainer to use ePRO whenever possible. However, before jumping in, it is important to be mindful of the considerations and challenges that come with implementing ePRO within your organization.

Historically, the implementation of electronic clinical systems in general has been challenging. In the majority of cases it requires a move from a paper-based process to an electronic system in an environment that has always relied on paper, which hinders the adoption of computer systems that are seen as alien. Taking electronic data capture (EDC) as an example, an international survey found that 46% of respondents identified inertia or concern about changing current processes, and 40% identified resistance from investigative sites, as the major causes of adoption delays [2].

ePRO is not immune to these challenges; in fact, it could be argued that ePRO is even more susceptible. ePRO suffers from the same technical issues and user-acceptance problems as EDC, but it is also placed in the hands of potentially thousands of study participants, many of whom may have little technical understanding. Additionally, ePRO relies on hardware (a mobile device or tablet), cellular network or Wi-Fi connectivity, translation into each participant’s local language, multiple user bases (study teams, investigators and participants) and local helpdesk support. Each of these comes with its own challenges and associated costs, and few, if any, are encountered with EDC.

ePRO is one of the few electronic systems that collect source data directly, and as a result it comes under increased scrutiny from a data integrity and quality perspective, especially when used to collect primary or secondary end point data. The system must always be available so that subjects can be activated on ePRO devices. If a participant leaves a clinical site without an active device, data may be missed; this can be construed as a serious quality issue and may put subject safety at risk.

Before I go into the more detailed challenges associated with ePRO, let’s first consider the financial costs.

On the surface, implementing ePRO appears significantly more costly than paper. The devices and associated logistics, data usage (monthly SIM costs), licenses, helpdesk and translations all contribute to costs ranging from hundreds of thousands of dollars to multi-million-dollar contracts per study.

When making a business case for ePRO it is important to take into account the hidden costs associated with paper in order to compare the two.

When a full assessment is carried out, the cost gap between ePRO and paper narrows significantly. ePRO vendors have even put forward examples in which paper diaries add more cost to a study budget than ePRO.

The business case for implementing ePRO should not be based solely on raw cost; a case built that way is likely to fail to win agreement at leadership level. You will find acceptance easier to gain if you can show that ePRO costs are comparable to paper while concentrating on the intangible benefits, because in the case of ePRO these are the real reasons for considering it. Increasing the quality of data collection results in more confidence in that data, which in turn reduces the likelihood of rejection when it is submitted to the regulators (predominantly for primary and secondary end point data). Receiving data in real time and reducing the need for data cleaning shortens the timelines to close the study, which can help get a product to market sooner and results in cost avoidance.

Many ePRO vendors provide a cost calculator: a spreadsheet into which the sponsor can plug parameters associated with their study to estimate costs before engaging with the vendor. Only a small number of parameters are required to calculate a good estimate, the most important being the length of the study in months and the number of participants. The length of the study drives the helpdesk, data usage and project management (PM) costs, whereas the number of participants drives the device, logistics and shipping costs. There are other costs, associated with system configuration, translation, the number of sites, etc., but for larger studies these are often negligible in comparison.
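To make the two main cost drivers concrete, here is a minimal Python sketch of the kind of arithmetic such a calculator performs. It simplifies by assuming that the length-driven costs are flat monthly rates and the participant-driven costs are flat per-participant rates, with everything else lumped into one fixed figure. The function name and all rates are hypothetical placeholders, not any vendor's actual calculator or prices.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MonthlyRates:
    """Costs driven by study length. All figures are hypothetical placeholders."""
    helpdesk: float
    data_usage: float
    project_management: float


@dataclass
class PerParticipantRates:
    """Costs driven by participant numbers. All figures are hypothetical placeholders."""
    device: float
    logistics_and_shipping: float


def estimate_epro_cost(
    study_months: int,
    participants: int,
    monthly: MonthlyRates,
    per_participant: PerParticipantRates,
    fixed_costs: float = 0.0,
) -> dict[str, float]:
    """Rough ePRO budget estimate from the two main cost drivers."""
    # Study length drives helpdesk, data usage and PM costs.
    time_driven = study_months * (
        monthly.helpdesk + monthly.data_usage + monthly.project_management
    )
    # Participant numbers drive device, logistics and shipping costs.
    participant_driven = participants * (
        per_participant.device + per_participant.logistics_and_shipping
    )
    # Configuration, translation, number of sites, etc. lumped into one figure.
    total = time_driven + participant_driven + fixed_costs
    return {
        "time_driven": time_driven,
        "participant_driven": participant_driven,
        "fixed": fixed_costs,
        "total": total,
    }


if __name__ == "__main__":
    estimate = estimate_epro_cost(
        study_months=24,
        participants=500,
        monthly=MonthlyRates(helpdesk=2_000, data_usage=1_500, project_management=5_000),
        per_participant=PerParticipantRates(device=400, logistics_and_shipping=100),
        fixed_costs=50_000,
    )
    print(estimate)
```

With the illustrative inputs above (24 months, 500 participants), the length-driven costs come to 204,000 and the participant-driven costs to 250,000. The total is 504,000, including the fixed figure, in whatever currency the rates are expressed.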

In summary, it is important to build a business case for ePRO within your organization to help gain acceptance at leadership level. The business case should include areas of efficiency over paper, together with examples of ePRO costs produced with the vendors’ cost calculators, and should emphasize the other benefits of ePRO compared to paper, such as subject safety.

In the past, ePRO implementations were customized almost from the ground up, with the study specifics coded into the vendor’s study builder toolkit. This meant a huge validation effort to ensure errors and bugs were caught before studies went live. Inevitably, despite all this testing, some issues did make it through to the live study, causing frustration for participants, investigators and study teams.

Over the past decade the systems have become more sophisticated, and much of the coding once required during the implementation phase has been replaced with configuration. Vendors have also introduced library functionality that allows sponsors to define questionnaires up front and reuse them across studies. Because a questionnaire is not rebuilt every time, there is less opportunity to introduce errors. Reusing questionnaires from a library also means less work for the vendor per study and less validation for the sponsor, which can reduce the time and cost of the implementation phase.

It may also be possible to standardize other areas of functionality, such as the workflow governing when questionnaires are made available to participants, the alerting system or the visit schedule. Standardizing across therapeutic areas may not be possible, but within a therapeutic area where multiple studies collect the same data, this approach can substantially reduce implementation timelines while reducing the risk of software errors on the studies.

ePRO can be implemented in a number of different modalities. In this blog we concentrate on two: provisioned devices, which are supplied by the ePRO vendor at a cost to the study sponsor, and “bring your own device” (BYOD), where a subject’s own device is used as the ePRO instrument. Note that all studies require provisioned devices to some degree, to cater for subjects who either do not own a compatible mobile device or do not own a mobile device at all.

When provisioning devices, ePRO vendors are responsible for the associated logistics such as software installation and shipping. Vendors are generally very knowledgeable when it comes to the customs regulations in many countries, including the average timelines required to get a shipment to a site.

In scenarios where sites recruit competitively, planning ahead is particularly important. Because it may not be possible to predict how many subjects will be recruited at a specific site, and therefore how many devices that site will need, it is necessary to purchase a sensible overage of provisioned devices. Although costly, this ensures sites will not run out of devices.
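As a rough illustration of a “sensible overage”, the sketch below sizes a device order using one hypothetical rule. It buys a percentage overage on top of expected enrolment, but never fewer devices than would let every active site hold a minimum buffer stock. The rule, the function name and the numbers are our assumptions for illustration, not a vendor formula.

```python
def devices_to_purchase(
    expected_participants: int,
    active_sites: int,
    overage_percent: int,
    minimum_per_site: int,
) -> int:
    """Hypothetical sizing rule for provisioned devices under competitive recruitment."""
    if min(expected_participants, active_sites, overage_percent, minimum_per_site) < 0:
        raise ValueError("all inputs must be non-negative")
    # Expected enrolment plus a percentage overage, rounded up to whole devices
    # (integer ceiling division avoids floating-point rounding surprises).
    with_overage = -(-expected_participants * (100 + overage_percent) // 100)
    # Per-site enrolment cannot be predicted, so every active site also needs a buffer.
    site_buffer = active_sites * minimum_per_site
    return max(with_overage, site_buffer)


if __name__ == "__main__":
    print(devices_to_purchase(300, active_sites=25, overage_percent=20, minimum_per_site=3))
    print(devices_to_purchase(40, active_sites=25, overage_percent=20, minimum_per_site=3))
```

For example, 300 expected participants with a 20% overage gives 360 devices. A small study of 40 participants across 25 sites, each holding 3 devices, is instead driven by the site buffer (75 devices).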

With an increasing proportion of the world’s population now owning smartphones, BYOD was seen as the natural progression for ePRO. It reduces the cost and burden of acquiring devices and the associated logistics, and it also reduces the monthly data usage costs. These costs do not disappear completely, as a certain level of provisioning is still required for participants who do not own a compatible smartphone. BYOD also reduces the risk of not having enough devices on site, especially, as mentioned above, with competitive recruitment. However, BYOD comes with its own set of unique challenges, mainly associated with data integrity and privacy. Some considerations might be:

There are clearly many benefits to using BYOD over provisioned devices, and more and more sponsors are comfortable moving into this space. However, it is important to consider the implications before doing so.

In the next instalment of this blog, we will discuss some other challenges of implementing ePRO in your organisation, such as connectivity, translations, and end user acceptance testing. Keep an eye on our Opinion page for part two of the series, coming soon.

[1] U.S. Food and Drug Administration. Guidance for Industry: Electronic Source Data in Clinical Investigations. 2013. http://www.fda.gov/downloads/drugs/guidancecomplianceregulatoryinformation/guidances/ucm328691.pdf

[2] Welker JA. Implementation of electronic data capture systems: barriers and solutions. Contemp Clin Trials. 2007 May;28(3):329-36. doi: 10.1016/j.cct.2007.01.001. Epub 2007 Jan 11. PMID: 17287151.

Planning for successful User Acceptance Testing in a lab or clinical setting

User Acceptance Testing (UAT) is one of the later stages of a software implementation project. In the project timeline, UAT sits between the completion of system configuration/customisation and go-live. Within a regulated lab or clinical setting, UAT can be informal testing prior to validation or, more often, forms the Performance Qualification (PQ).

Whether UAT is performed in a non-regulated or regulated environment it is important to note that UAT exists to ensure that business processes are correctly reflected within the software. In short, does the new software function correctly for your ways of working?

You would never go into any project without clear objectives, and software implementations are no exception. It is important to understand exactly how you need software workflows and processes to operate.

To clarify your needs, it is essential to have a set of requirements outlining the intended outcomes of the processes. How do you want each workflow to perform? How will you use this system? What functionality do you need and how do you need the results presented? These are all questions that must be considered before going ahead with a software implementation project.

Creating detailed requirements will highlight areas of the business processes that will need to be tested within the software by the team leading the User Acceptance Testing.

Requirements, like the applications they describe, have a lifecycle, and they are normally defined early in the purchase phase of a project. These ‘pre-purchase’ requirements are product-independent and will evolve multiple times as the application is selected and implementation decisions are made.

While it is good practice to constantly revise the requirements list as the project proceeds, it is often the case that they are not well maintained. This can be due to a variety of reasons, but regardless of the reason you should ensure the system requirements are up to date before designing your plan for UAT.

A common mistake for inexperienced testing teams is to test too many items or outcomes. It may seem like a good idea to test as much as possible, but this invariably means that all requirements, from the critical to the inconsequential, are tested to the same low level.

Requirements are often prioritised during the product selection and implementation phases using MoSCoW analysis. This divides requirements into Must-have, Should-have, Could-have and Won’t-have, and is a great tool for assessing requirements in these earlier phases.

During the UAT phase these classifications are less useful. For example, there may be a requirement for a complex calculation within a LIMS, ELN or ePRO system. Such calculations may be classified as ‘Could-have’, or low priority, because there are other options for performing them outside the system. However, if they are added to the system during implementation, their complexity most likely makes them a high priority for testing.

To avoid this, the requirements, or more precisely their priorities, need to be re-assessed as part of the initial UAT phase.

A simple but effective way to set priority is to assess each requirement against the risk criteria and assign a testing score. The following criteria are often used together to assess risk:

Once the priority of the requirements has been classified the UAT team can then agree how to address the requirements in each category.

A low score could mean the requirement is not tested or included in a simple checklist.

A medium score could mean the requirement is included in a test script with several requirements.

A high score could mean the requirement is the subject of a dedicated test script.
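The scoring step can be sketched in a few lines of Python. The risk criteria themselves are not reproduced here, so the criterion names below (`impact`, `complexity`, `customisation`), the 1 to 3 rating scale and the score thresholds are all hypothetical. Only the mapping from score band to testing approach follows the three options above.

```python
from __future__ import annotations

from enum import Enum


class ScriptingApproach(Enum):
    """The three options described above for low, medium and high scores."""
    CHECKLIST = "not tested, or included in a simple checklist"
    SHARED_SCRIPT = "included in a test script with several requirements"
    DEDICATED_SCRIPT = "subject of a dedicated test script"


def risk_score(ratings: dict[str, int]) -> int:
    """Sum per-criterion ratings, each on an assumed 1 (low) to 3 (high) scale."""
    for criterion, rating in ratings.items():
        if rating not in (1, 2, 3):
            raise ValueError(f"{criterion}: rating must be 1, 2 or 3, got {rating}")
    return sum(ratings.values())


def choose_approach(score: int, medium_from: int = 5, high_from: int = 7) -> ScriptingApproach:
    """Map a score to a testing approach; the thresholds are illustrative only."""
    if score >= high_from:
        return ScriptingApproach.DEDICATED_SCRIPT
    if score >= medium_from:
        return ScriptingApproach.SHARED_SCRIPT
    return ScriptingApproach.CHECKLIST


if __name__ == "__main__":
    # Hypothetical requirements and criterion names, for illustration only.
    requirements = {
        "REQ-012 complex calculation": {"impact": 3, "complexity": 3, "customisation": 3},
        "REQ-021 audit trail report": {"impact": 2, "complexity": 2, "customisation": 1},
        "REQ-040 screen label text": {"impact": 1, "complexity": 1, "customisation": 1},
    }
    for req_id, ratings in requirements.items():
        score = risk_score(ratings)
        print(f"{req_id}: score {score} -> {choose_approach(score).value}")
```

Summing the ratings keeps the scheme transparent. A weighted sum or a likelihood-times-impact product would work just as well, provided the team agrees on the criteria and thresholds up front.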

A key question our team is often asked is how many test scripts will be needed, and in what order they should be executed. These questions can be answered by creating a Critical Test Plan (CTP). The CTP approach requires that you first rise above the requirements and identify the key business workflows you are replicating in the system. For a LIMS, these would include:

Sample Creation, Sample Receipt, Sample Prep, Testing, Result Review, Approval and Final Reporting.

Next, the test titles required for each key workflow are added in a logical order to a CTP diagram, which helps clarify the relationship between the tests. The CTP is also a great tool for communicating the planned testing, and it helps to visualise any workflows that may have been overlooked.
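A CTP is ultimately a diagram, but its ordering logic can also be sketched as data. The Python below assumes the LIMS workflow order listed above, and the test titles are hypothetical examples. It numbers the tests in workflow order and lists any workflow that has no test yet, mirroring how the CTP helps expose overlooked workflows.

```python
from __future__ import annotations

# Key LIMS workflows, in the order given in the text.
LIMS_WORKFLOWS = [
    "Sample Creation",
    "Sample Receipt",
    "Sample Prep",
    "Testing",
    "Result Review",
    "Approval",
    "Final Reporting",
]


def build_ctp(test_titles: dict[str, list[str]]) -> list[tuple[int, str, str]]:
    """Return (execution order, workflow, test title) following the workflow sequence."""
    unknown = set(test_titles) - set(LIMS_WORKFLOWS)
    if unknown:
        raise ValueError(f"unknown workflows: {sorted(unknown)}")
    plan: list[tuple[int, str, str]] = []
    for workflow in LIMS_WORKFLOWS:
        # Tests within a workflow keep the order in which they were listed.
        for title in test_titles.get(workflow, []):
            plan.append((len(plan) + 1, workflow, title))
    return plan


def uncovered_workflows(test_titles: dict[str, list[str]]) -> list[str]:
    """Workflows with no test title yet: possible gaps in the plan."""
    return [wf for wf in LIMS_WORKFLOWS if not test_titles.get(wf)]


if __name__ == "__main__":
    # Hypothetical test titles, for illustration only.
    titles = {
        "Sample Creation": ["Create samples"],
        "Sample Receipt": ["Receive samples"],
        "Testing": ["Enter results", "Calculated results"],
        "Result Review": ["Review results"],
        "Final Reporting": ["Certificate of analysis"],
    }
    for step in build_ctp(titles):
        print(step)
    print("No tests yet:", uncovered_workflows(titles))
```

In this example, "Sample Prep" and "Approval" are reported as having no tests, which is exactly the kind of gap the CTP review is meant to surface before script authoring begins.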

Now that the test titles have been decided upon, requirements can be assigned to a test title and we are ready to start authoring the scripts.

There are several different approaches to test script formats. These range from simple checklists, through ‘objective-based’ scripts, which give an overview of the areas to test but not the specifics of how to test them, to highly prescriptive, step-by-step instruction-based scripts.

When testing a system within the regulated space, you generally have little choice but to use the step-by-step approach.

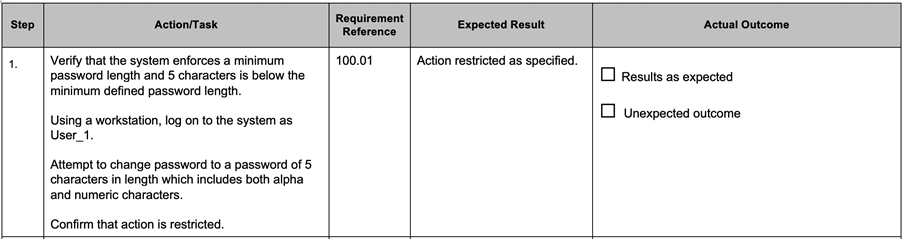

Test scripts containing step-by-step instructions should have a number of elements for each step:

A typical example is given below.

However, even when using the step-by-step format for test scripts, there are still pragmatic steps that can be taken to ensure efficient testing.

Data Setup – Often it is necessary to create system objects to test within a script. In an ELN this could be an experiment, reagent or instrument; in ePRO, a subject or site. If you are not directly testing the creation of these system objects in the test script, their creation should be detailed in a separate data setup section outside the ‘step by step’ instructions. Besides saving time during script writing, this means any mistakes made in the data setup will not be classified as script errors and can be quickly corrected without impacting test execution.

Low Risk Requirements – If you have decided to test low risk requirements, consider the most appropriate way to demonstrate that they are functioning correctly. A method we have used successfully is to add low risk requirements to a table outside the step-by-step instructions. The table acts as a checklist, with script executors marking each requirement that they see working correctly while executing the main body of step-by-step instructions. This avoids adding the low risk requirements to the main body of the test script but still ensures they are tested.

Test Script Length – A common mistake during script writing is to make scripts too long. If a step fails while a script is being executed, one of the resulting actions could be to re-run the script. This is onerous enough when you are on page 14 of a 15-page script, but it is significantly more time-consuming if you are on page 99 of 100. While there is no hard and fast rule on the number of steps or pages a script should have, it is best to keep scripts to a reasonable length. An alternative way to deal with longer scripts is to divide them into sections, which allows the option of restarting the current block of instructions within a script instead of the whole script.
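To put rough numbers on the benefit of sectioning, the sketch below counts how many steps must be executed again, up to and including a failed step, if the section containing the failure is restarted from its first step. It counts steps rather than pages and assumes a failure always triggers a restart of the current section; both are simplifications for illustration.

```python
from __future__ import annotations

from itertools import accumulate


def steps_to_repeat(section_sizes: list[int], failed_step: int) -> int:
    """Steps executed again, up to and including `failed_step` (1-based),
    when the section containing the failure is restarted from its first step.
    A script with no sections is simply one section containing every step."""
    if not 1 <= failed_step <= sum(section_sizes):
        raise ValueError("failed_step is outside the script")
    section_start = 1
    for section_end in accumulate(section_sizes):
        if failed_step <= section_end:
            return failed_step - section_start + 1
        section_start = section_end + 1
    raise AssertionError("unreachable: failed_step was range-checked above")


if __name__ == "__main__":
    print(steps_to_repeat([100], failed_step=99))      # one long script
    print(steps_to_repeat([10] * 10, failed_step=99))  # same script in ten sections
```

With a single 100-step section, a failure at step 99 means repeating 99 steps. Splitting the same script into ten 10-step sections reduces that to 9.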

An important task when co-ordinating UAT is to be fully transparent about which requirements are to be tested, and in which scripts. We recommend adding this detail against each requirement in the User Requirements Specification (URS); the appended URS is often referred to as a Requirements Trace Matrix. For additional clarity, we normally add a section to each test script that lists all the requirements tested in the script, and we add the individual requirement identifiers to the steps in the script that test them.
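The traceability described above can be checked mechanically. This minimal Python sketch uses hypothetical requirement and script identifiers. It builds the requirement-to-script mapping from the requirement IDs recorded against each script step, flags URS requirements that no script tests, and derives the "requirements tested" section for the top of a script.

```python
from __future__ import annotations


def build_trace_matrix(
    requirement_ids: list[str],
    scripts: dict[str, list[tuple[int, list[str]]]],
) -> dict[str, list[str]]:
    """Map each URS requirement ID to the test scripts that exercise it.

    `scripts` maps a script ID to its steps, each step being
    (step number, [requirement IDs tested at that step]).
    """
    matrix: dict[str, list[str]] = {rid: [] for rid in requirement_ids}
    for script_id, steps in scripts.items():
        for step_no, rids in steps:
            for rid in rids:
                if rid not in matrix:
                    raise KeyError(f"{script_id} step {step_no} cites unknown requirement {rid}")
                if script_id not in matrix[rid]:
                    matrix[rid].append(script_id)
    return matrix


def untested_requirements(matrix: dict[str, list[str]]) -> list[str]:
    """Requirements that no script currently tests."""
    return sorted(rid for rid, script_ids in matrix.items() if not script_ids)


def requirements_section(steps: list[tuple[int, list[str]]]) -> list[str]:
    """The 'requirements tested' list for the top of a script, derived from its steps."""
    return sorted({rid for _, rids in steps for rid in rids})


if __name__ == "__main__":
    # Hypothetical identifiers, for illustration only.
    urs = ["URS-001", "URS-002", "URS-003", "URS-004"]
    scripts = {
        "TS01": [(1, ["URS-001"]), (2, ["URS-001", "URS-002"])],
        "TS02": [(1, ["URS-002"])],
    }
    matrix = build_trace_matrix(urs, scripts)
    print(matrix)
    print("Untested:", untested_requirements(matrix))
    print("TS01 covers:", requirements_section(scripts["TS01"]))
```

An untested requirement is not automatically an error. As discussed above, some low-risk requirements may deliberately not be tested, so the output is best read as a list to confirm rather than a list of defects.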

UAT is an essential phase in implementing new software, and for inexperienced users it can become time-consuming and difficult to progress. However, following the steps above from our team of experts will help you author appropriate test scripts and contribute to the overall success of a UAT project. In a future blog we will look at dry-running scripts and formal test execution, so keep an eye on our Opinion page for further updates.

Industry leader interviews – Ajit Nagral. An insight into the world of scientific entrepreneurship

Ajit, please introduce yourself.

My career history is uncomplicated because straight out of college I started working for myself, and it has been that way for my entire career. I graduated in the US with a background in computing science and I decided I did not want to take the traditional path of finding a job and building a career that way. I always liked to be doing things differently. Coming out of college with very little money and a limited skillset, the reasonable thing to do was to get into software consulting because that did not require a whole lot of capital. Since then, I have founded four companies in the life science sector.

What led you to the science industry?

I ended up in the pharma and life science industry very early on, by chance. After I graduated, I was in Boston with database and computing skills, and I started a small consulting company called Megaware. I found out that a large life science vendor in Massachusetts had some opportunities around a new life science product it was building. After a lot of persistence, the CIO reluctantly gave me 15 minutes to speak with him. I told him about my background and what I was attempting to do. He said they didn’t have anything for me within his organisation, but he would connect me to his counterpart at their analytical instrument division. I then got a contract to help this analytical instrument company build part of their (then) new product, the first database-driven instrument software, and that was my entry into the pharma world. That seems simple, but it was fortune and persistence more than anything else.

Can you give us a potted history of each of your companies?

My first company was Megaware. Back in those days, labs were making the transition from VAX/VMS-based systems to PC-based systems. Enterprises had huge investments in VAX/VMS systems and in HP printers, and we produced a product that could print from a VAX/VMS system to an HP printer. That seems straightforward, but it was not, because you are printing to a Windows-based printer from a non-Windows system. We built a whole system to solve this issue. I ran and built Megaware for four to five years, and it was then sold to a boutique consulting company.

NuGenesis was my second company. We identified the issue that labs had several different instruments from different vendors, but they did not talk to each other. You had large pharma companies printing reams of paper and spending millions of dollars on running labs across the globe, and eventually all of that intellectual property ended up on paper! In those days, when they submitted a new drug application, it ran to tens of hundreds of pieces of paper that were carried to the regulatory agencies on trucks for review! It made no sense that something started out electronically and ended up on paper to be read manually by somebody. We were able to intercept print streams and capture a lot of information to make the data live. It is remarkable that 20 years later it is still being used – that says a lot about the value and sustainability of NuGenesis. I sold the business to Waters in 2004.

After I sold NuGenesis, I was clear that I wanted to stay in life sciences but do something different. I went back to many of my clients and asked what problems they were facing; that is when I landed in outsourcing in the area of drug safety, clinical and regulatory.

What was new and different was an area called pharmacovigilance. If you recall, there were a couple of landmark cases involving marketed drugs that had caused deaths. That is when the regulatory agencies realised they did not have a handle on adverse events. They approve drugs, the drugs come to market, and years later you start seeing adverse reactions that you did not see during the trial period. The regulatory agencies started mandating reporting of all adverse effects. With the visibility and potential liability, the biopharma industry sprang into action, and the floodgates opened to drug safety outsourcing. This was when we launched Sciformix – a scientific knowledge-based outsourcing provider for the life science industry. Once we reached maturity, we were processing around 1 million cases in any given year. We did everything from cancer drugs to consumer products, from cancer medication to sunscreen lotion. Our success at Sciformix was due to our ability to combine enough science with a very good process. Again, the company grew very rapidly, to over 1,300–1,400 people globally, and it came to a point where it felt like the time was right to divest, in 2018. Sciformix was acquired by LabCorp/Covance, a top-three CRO (and currently a leader in COVID-19 diagnostic testing).

What was the motivation behind the launch of Scitara?

Having done tech, services and global delivery, I thought I should combine these skills and focus on my finale!

Our core team believes Scitara is more than just a business; it is a goal of ours to solve a major problem that still exists in the scientific laboratory: data connectivity. We are pioneering a new digital revolution in lab data connectivity. We have invented a platform called Scitara DLX (data lab exchange), and our goal is that you connect your instruments, applications or anything else you use in the lab to the platform, and we guarantee they can talk to each other.

Our goal is that science labs can log into any system they are currently using and access data from any other system in the lab. We have a mantra of ‘no application or instrument left behind’. To achieve this goal we need cooperation from the industry, which is why I am calling this a finale. It will require all the connections we have made over the years, and the reputation we have built, to reach out to everyone in the ecosystem. Companies are making significant headway in their digital transformation initiatives, but they do not know how to get their lab data onto their digital platforms, and that is where we come in.

How did you find your entrepreneurial drive?

I am very driven to be independent. I am useless when it comes to working for someone else and fortunately, I have never had to. My personality drives me to try new things and dive into uncertainty and this has always pushed me into something completely new.

The building of my companies motivated me. What excites me is the building from the ground up. Each time the building is easier, but the expectations are higher. I do not build to divest – I build to create value, disrupt, and hopefully deliver a meaningful impact, and the rest takes care of itself.

If anyone comes to me for advice or mentoring, I ask them: why? Why do you want to do it? Why you? What is the motivation? The answer tells you a lot, very early on, about a person’s chances of success. It does not guarantee success, but a good understanding of the ‘why’ goes a long way. Beyond that, I’d say it is important to find a mentor from the industry; people need to recognise that investments happen in teams, not necessarily in ideas. Do not latch onto an idea too much, because things can change.

Create a loyal fanbase. People often think I have 500+ clients, but it is not the number that counts; it is whether you have a handful of loyal clients who make a lot of noise and reopen doors. That becomes exceedingly important.

What would you say makes you a successful entrepreneur?

We do not rely on big sales engines in our industry; it is about building solid connections and networks. When clients learn that I created the concept behind several successful companies, they admire that. There is no better way to connect with a client than through something they are fond of and that I am proud of.

I have learnt the hard way that you build the best partnerships in tough times. When things do not go right, how you react defines not only your relationship but your career as an entrepreneur. I have sold to the same clients across multiple companies. With most of those clients I have had difficult moments, and it has made our relationships much more resilient.

Having a non-scientific viewpoint has also really helped, particularly when it comes to products. To be able to look at the consumer world, or industrial world or finance world and understand how technology has evolved there and bring those learnings into the scientific world is invaluable.

What does the future hold for Ajit Nagral?

This is the first time, after doing this for 20+ years, that I have the liberty and luxury to ask: if this part of my journey were to end, what would my new journey be? It is the first time I have thought about it, and I think that comes with experience and the safety net I have built for myself and my family. I am eternally grateful to my customers, employees and investors for putting me in this position.

Hopefully Scitara is my last company, as an operating founder. There are many other things I want to do. In addition to being a tech guy I am also a musician. There are things I am doing in music production that I have started already – hopefully in a few years once I am done with Scitara, that is where I will end up!

Industry leader interviews – Andrew Miles. The role of pre-sales demos in successful vendor selection events