Following our sponsorship of SmartLab Exchange Europe and US earlier in 2023, and our sponsorship of FutureLabs this week, we’ve developed a view of what is happening across the lab informatics industry and where priorities lie for lab-centred organisations globally. We have also provided insight into the areas where budget-holders are looking to invest in new technologies.

Attending conferences globally means that our team can gather key insights to share with fellow informatics peers. Face-to-face interactions provide an opportunity to receive instant feedback and insight into lab informatics trends, from which we can extract valuable data.

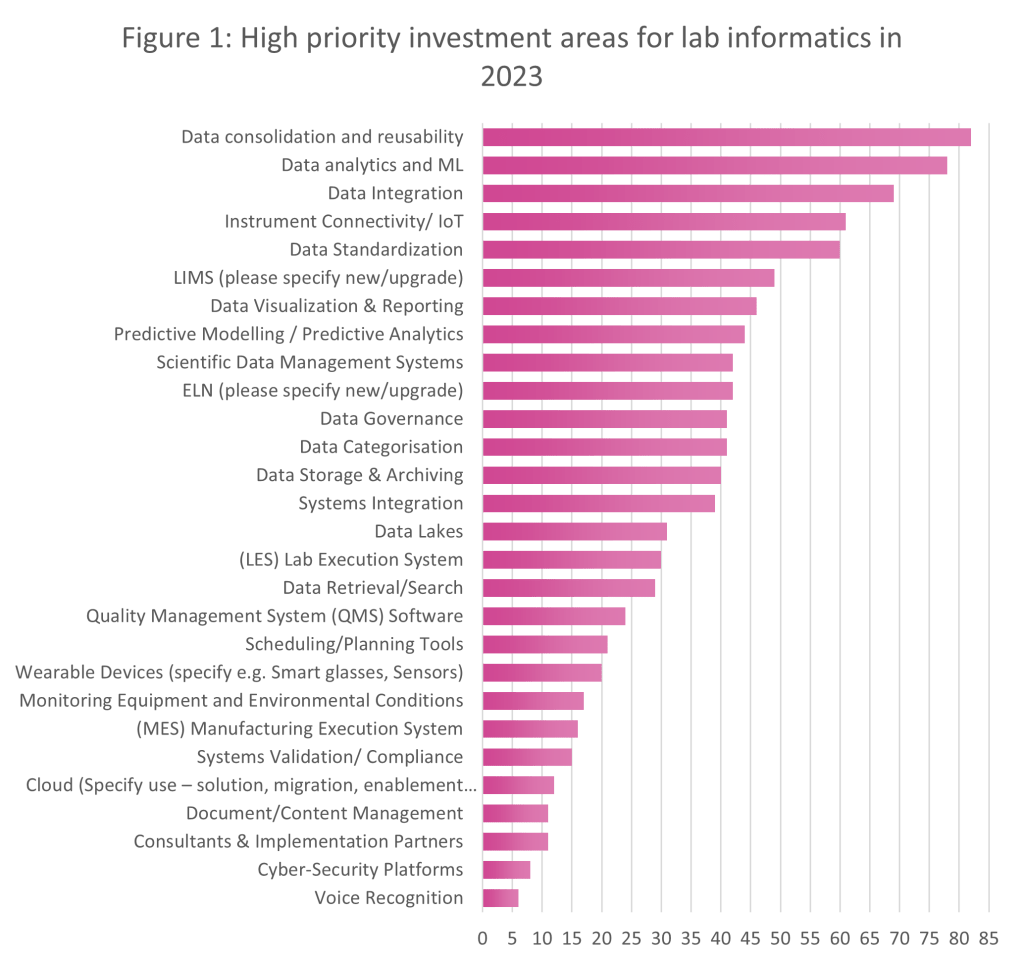

Having already spoken to delegates in North America and Europe this year, we have identified some of the high-priority investment areas for lab informatics in 2023 by comparing what is important to event attendees, who include representatives from leading pharma, biotech, material science, crop science, FMCG, and food companies. Across the global companies that attended, more than 120 people were polled.

Figure 1 represents the data from both SmartLab Exchange Europe and US, to give an overall view of lab informatics priorities across the entirety of 2023 thus far:

The graph also demonstrates other key lab informatics investment priorities (from the EU and US summits), and these include:

We can see a real trend towards intelligent systems this year, as data consolidation and reusability take centre stage and budget-holders look towards automation, both physical and within software systems, to reduce the risk of human and manual errors. This trend isn’t isolated to a particular lab sector either – we’re seeing similar patterns across all sectors.

Extracting feedback from delegates at conferences in all geographies means we can identify patterns in the data in order of priority. While Figure 1 highlights high priority investment areas, Figure 2 shows exactly what delegates at SmartLab Exchange Europe and US are planning to assign budget to in the next 12 months:

From Figure 2, we can see that immediate investment priorities for SmartLab Exchange Europe and US attendees are as follows:

Across both events and both geographies, we can see that automation and digitalisation rank highly among investment priorities for 2023. Laboratories are innovating technologically to meet growing demands on capacity and speed to market. Automation also substantially reduces the risk of human error, as repetitive and manual tasks can be carried out with ease using automated solutions.

We also learn that lab users are prioritising areas such as lab scheduling, method development, data governance, connectivity, artificial intelligence (AI), and machine learning (ML). As throughput expectations increase for labs around the world, the need to digitalise and streamline operations is more pressing than ever. The aim of many laboratories is to increase efficiency within the lab, and digitalisation acts as a catalyst in this process.

You can find our team from Wednesday 31st May to Friday 2nd June at FutureLabs Live, where we’ll be gathering more lab informatics insights from fellow sponsors and guests. Follow us on LinkedIn to be notified of other tradeshows Scimcon is attending this year.

Visit Scimcon at the event and contact us directly to book a conversation, to learn more about how we can support your lab informatics projects.

I am Mark Elsley, a Senior Clinical Research / Data Management Executive with 30 years’ experience working within the pharmaceutical sector worldwide for companies including IQVIA, Boehringer Ingelheim, Novo Nordisk and GSK Vaccines. I am skilled in leading multi-disciplinary teams on projects through full lifecycles to conduct a breadth of clinical studies including Real World Evidence (RWE) research. My specialist area of expertise is clinical data management, and I have written a book on this topic, “A Guide to GCP for Clinical Data Management”, published by Brookwood Global.

Data quality is a passion of mine and now receives a lot of focus from the regulators, especially since the updated requirements for source data in the latest revision of ICH-GCP. It is a concept that is often poorly understood, which leads organisations to continue collecting poor-quality data and to risk having those data rejected by the regulators.

White and Gonzalez [1] created a data quality equation which I think is a really good definition: Data Quality = Data Integrity + Data Management. Data integrity is made up of many components. The new version of ICH-GCP states that source data should be attributable, legible, contemporaneous, original, accurate, and complete. The Data Management part of the equation refers to the people who work with the data, the systems they use and the processes they follow. Put simply, staff working with clinical data must be qualified and trained on the systems and processes, processes must be clearly documented in SOPs, and systems must be validated. Everyone working in clinical research must have a data focus… Data management is not just for data managers!

By adopting effective strategies to maximise data quality, the variability of the data is reduced. This means study teams can reach sufficient statistical power with fewer enrolled patients (which also has a knock-on impact on the cost of managing trials) [2]. Fewer participants also lead to quicker conclusions being drawn, which ultimately allows new therapies to reach patients sooner.
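The link between variability and enrolment can be illustrated with a minimal sketch. It assumes the textbook normal-approximation formula for comparing the means of two equally sized arms, which is not taken from the cited article; the function name `patients_per_arm` and the values of `sigma` (standard deviation) and `delta` (difference to detect) are hypothetical.

```python
import math
from statistics import NormalDist


def patients_per_arm(sigma: float, delta: float,
                     alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate patients needed per arm for a two-arm comparison of means.

    Uses the normal approximation n = 2 * (z_alpha + z_beta)^2 * (sigma / delta)^2.
    This is an illustrative assumption, not a method described in the article.
    """
    if sigma <= 0 or delta <= 0:
        raise ValueError("sigma and delta must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    n = 2 * (z_alpha + z_beta) ** 2 * (sigma / delta) ** 2
    return math.ceil(n)


# Hypothetical numbers: the same treatment effect, measured with noisier
# versus cleaner data.
noisy = patients_per_arm(sigma=10.0, delta=5.0)
clean = patients_per_arm(sigma=8.0, delta=5.0)
print(f"noisier data: {noisy} per arm, cleaner data: {clean} per arm")
```

Under these assumptions the required sample size scales with the square of the standard deviation, so even a modest reduction in variability noticeably lowers the number of patients needed.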

I believe that clinical trial data are vitally important. These assets are the sole basis on which regulators decide whether or not to approve a marketing authorization application, which ultimately allows us to improve patient outcomes by getting new, effective drugs to market faster. For a pharmaceutical company, the success of clinical trial data can influence the stock price, and hence the value of the company, by billions of dollars [3]. On average, positive trials lead to a 9.4% increase in stock price while negative trials contribute to a 4.5% decrease. The cost of managing clinical trials amounts to a median cost per patient of US$ 41,413 [4], or US$ 69 per data point (based on 599 data points per patient) [5]. In short, clinical data have a huge impact on the economics of the pharmaceutical industry.
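The per-data-point figure follows directly from the two numbers quoted above. A minimal sketch of the arithmetic (the function and constant names are illustrative only):

```python
# Figures quoted in the text: median cost per patient and data points per patient.
MEDIAN_COST_PER_PATIENT_USD = 41_413
DATA_POINTS_PER_PATIENT = 599


def cost_per_data_point(cost_per_patient: float, data_points: int) -> float:
    """Return the average cost of a single data point for one patient."""
    if data_points <= 0:
        raise ValueError("data_points must be positive")
    return cost_per_patient / data_points


cost = cost_per_data_point(MEDIAN_COST_PER_PATIENT_USD, DATA_POINTS_PER_PATIENT)
print(f"US$ {cost:.2f} per data point")  # prints "US$ 69.14 per data point"
```

Rounded to the nearest dollar, this gives the US$ 69 per data point quoted above.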

Healthcare organizations generate and use immense amounts of data, and the use of good study data can go on to significantly reduce healthcare costs [6, 7]. The ability to capture, share, and store vast amounts of healthcare data and transactions, together with the rapid processing offered by big data tools, has transformed the healthcare industry by improving patient outcomes while reducing costs. Data quality is not just a nice-to-have: prioritizing high-quality data should be the focus of any healthcare organization.

However, when data quality is not treated as a top priority in health organizations, large negative impacts can follow. For example, Public Health England recently reported that nearly 16,000 coronavirus cases went unreported in England. When outputs such as this are unreliable, guesswork and risk in decision making are heightened. This shows that the better the data quality, the more confidence users will have in the outputs they produce, lowering risk in the outcomes and increasing efficiency.

ICH-GCP [8] for interventional studies and GPP [9] for non-interventional studies contain many requirements with respect to clinical data, so a thorough understanding of them is essential. It is impossible to achieve 100% data quality, so a risk-based approach will help you decide which areas to focus on. The most important data in a clinical trial are patient safety and primary endpoint data, so the study team should consider the risks to these data in detail. For example, for adverse event data, one of the risks to consider is the recall period of the patient if they visit the site infrequently. A patient is unlikely to have a detailed recollection of a minor event that happened a month ago. Collecting symptoms via an electronic diary could significantly decrease the risk and improve the data quality in this example. Risks should be routinely reviewed and updated as needed. By following the guidelines and adopting a risk-based approach to data collection and management, you can be confident that analysis of the key parameters of the study is robust and trustworthy.

Aside from the risk-based approach mentioned above, another area which I feel is important is to collect only the data you need; anything more is a waste of money and delays getting drugs to patients. If you over-burden sites and clinical research teams with huge volumes of data, the risk of mistakes increases. I still see many studies where data are collected but never analysed. It is better to collect only the data you need and dedicate the time saved to increasing the quality of that smaller dataset.

Did you know that:

In 2016, the FDA published guidance [12] for late stage/post approval studies, stating that excessive safety data collection may discourage the conduct of these types of trials by increasing the resources needed to perform them and could be a disincentive to investigator and patient participation in clinical trials.

The guidance also stated that selective safety data collection may facilitate the conduct of larger trials without compromising the integrity and the validity of trial results. It also has the potential to facilitate investigators and patients’ participation in clinical trials and help contain costs by making more-efficient use of clinical trial resources.

Technologies such as Electronic Health Records (EHR), electronic patient reported outcomes (ePRO), drug safety systems and other emerging digital technologies are currently being used in many areas of healthcare. Technologies such as these can increase data quality but simultaneously increase the number of factors involved. They affect costs, involve the management of vendors and add to the compliance burden, especially in the areas of vendor qualification, system validation, and transfer validation.

I may be biased as my job title includes the word ‘Data’ but I firmly believe that data are the most important assets in clinical research, and I have data to prove it!

Scimcon is proud to support clients around the globe with managing data at its highest quality. For more information, contact us.

1. White, Christopher H., and Lizzandra Rivera González. “The Data Quality Equation—A Pragmatic Approach to Data Integrity.” www.ivtnetwork.com, 17 Aug. 2015, www.ivtnetwork.com/article/data-quality-equation%E2%80%94-pragmatic-approach-data-integrity#:~:text=Data%20quality%20may%20be%20explained. Accessed 25 Sept. 2020.

2. Alsumidaie, Moe, and Artem Andrianov. “How Do We Define Clinical Trial Data Quality If No Guidelines Exist?” Applied Clinical Trials Online, 19 May 2015, www.appliedclinicaltrialsonline.com/view/how-do-we-define-clinical-trial-data-quality-if-no-guidelines-exist. Accessed 26 Sept. 2020.

3. Rothenstein, Jeffrey & Tomlinson, George & Tannock, Ian & Detsky, Allan. (2011). Company Stock Prices Before and After Public Announcements Related to Oncology Drugs. Journal of the National Cancer Institute. 103. 1507-12. 10.1093/jnci/djr338.

4. Moore, T. J., Heyward, J., Anderson, G., & Alexander, G. C. (2020). Variation in the estimated costs of pivotal clinical benefit trials supporting the US approval of new therapeutic agents, 2015-2017: a cross-sectional study. BMJ open, 10(6), e038863. https://doi.org/10.1136/bmjopen-2020-038863

5. O’Leary E, Seow H, Julian J, Levine M, Pond GR. Data collection in cancer clinical trials: Too much of a good thing? Clin Trials. 2013 Aug;10(4):624-32. doi: 10.1177/1740774513491337. PMID: 23785066.

6. Khunti K, Alsifri S, Aronson R, et al. Rates and predictors of hypoglycaemia in 27 585 people from 24 countries with insulin-treated type 1 and type 2 diabetes: the global HAT study. Diabetes Obes Metab. 2016;18(9):907-915. doi:10.1111/dom.12689

7. Evans M, Moes RGJ, Pedersen KS, Gundgaard J, Pieber TR. Cost-Effectiveness of Insulin Degludec Versus Insulin Glargine U300 in the Netherlands: Evidence From a Randomised Controlled Trial. Adv Ther. 2020;37(5):2413-2426. doi:10.1007/s12325-020-01332-y

8. Ema.europa.eu. 2016. Guideline for good clinical practice E6(R2). [online] Available at: https://www.ema.europa.eu/en/documents/scientific-guideline/ich-e-6-r2-guideline-good-clinical-practice-step-5_en.pdf [Accessed 10 May 2021].

9. Pharmacoepi.org. 2020. Guidelines For Good Pharmacoepidemiology Practices (GPP) – International Society For Pharmacoepidemiology. [online] Available at: https://www.pharmacoepi.org/resources/policies/guidelines-08027/ [Accessed 31 October 2020].

10. Medical Device Innovation Consortium. Medical Device Innovation Consortium Project Report: Excessive Data Collection in Medical Device Clinical Trials. 19 Aug. 2016. https://mdic.org/wp-content/uploads/2016/06/MDIC-Excessive-Data-Collection-in-Clinical-Trials-report.pdf

11. O’Leary E, Seow H, Julian J, Levine M, Pond GR. Data collection in cancer clinical trials: Too much of a good thing? Clin Trials. 2013 Aug;10(4):624-32. doi: 10.1177/1740774513491337. PMID: 23785066.

12. FDA. Determining the Extent of Safety Data Collection Needed in Late-Stage Premarket and Postapproval Clinical Investigations Guidance for Industry. Feb. 2016.
